I remember the exact moment the “AI magic” became a business reality for me. I was scrolling through thousands of photos on my phone, looking for a specific shot of my daughter, Anjali, from years ago. I typed a few words, and there she was—the image magically sharpened, the background distractions gone. It felt like a miracle.

But as a global MARCOM chief executive, my second thought wasn’t about the magic. It was about the math. Behind those few seconds of search was a massive orchestration of compute, energy, and capital.

AI has a new currency: tokens. Every interaction with a large model—every prompt, every generated line of code, every translation, every summarization—consumes tokens. Behind those tokens are compute, memory, bandwidth, and energy.

If we’re serious about “democratizing AI,” we have to get serious about who can afford tokens, and at what cost.

Recently, MediaTek’s CEO put it simply: we want people to have access to as many tokens as possible at the lowest possible cost. That’s the starting point for democratization.

Tokens as the Currency of Intelligence

Why talk about tokens at all? Well…because they give us a practical way to think about AI access. In the traditional economy, we track dollars and cents. In the AI economy, the fundamental unit of value is the token. Every word generated, every image analyzed, and every decision made by a model is a transaction measured in tokens.

Tokens are essentially the currency of AI. The intelligence unleashed through AI, or the access to that intelligence, should not just be in the hands of a few.

An AI-native future is one where:

- A small business can afford to run continuous assistants.

- A student in a developing market can get real-time tutoring in their own language.

- A doctor can query clinical-grade models at the bedside without worrying about the cost of each interaction.

For that to be possible, the cost per token has to drop dramatically, and the energy per token must be tightly optimized.

That’s where companies like MediaTek come in.

Tokens per TCO and Tokens per Watt

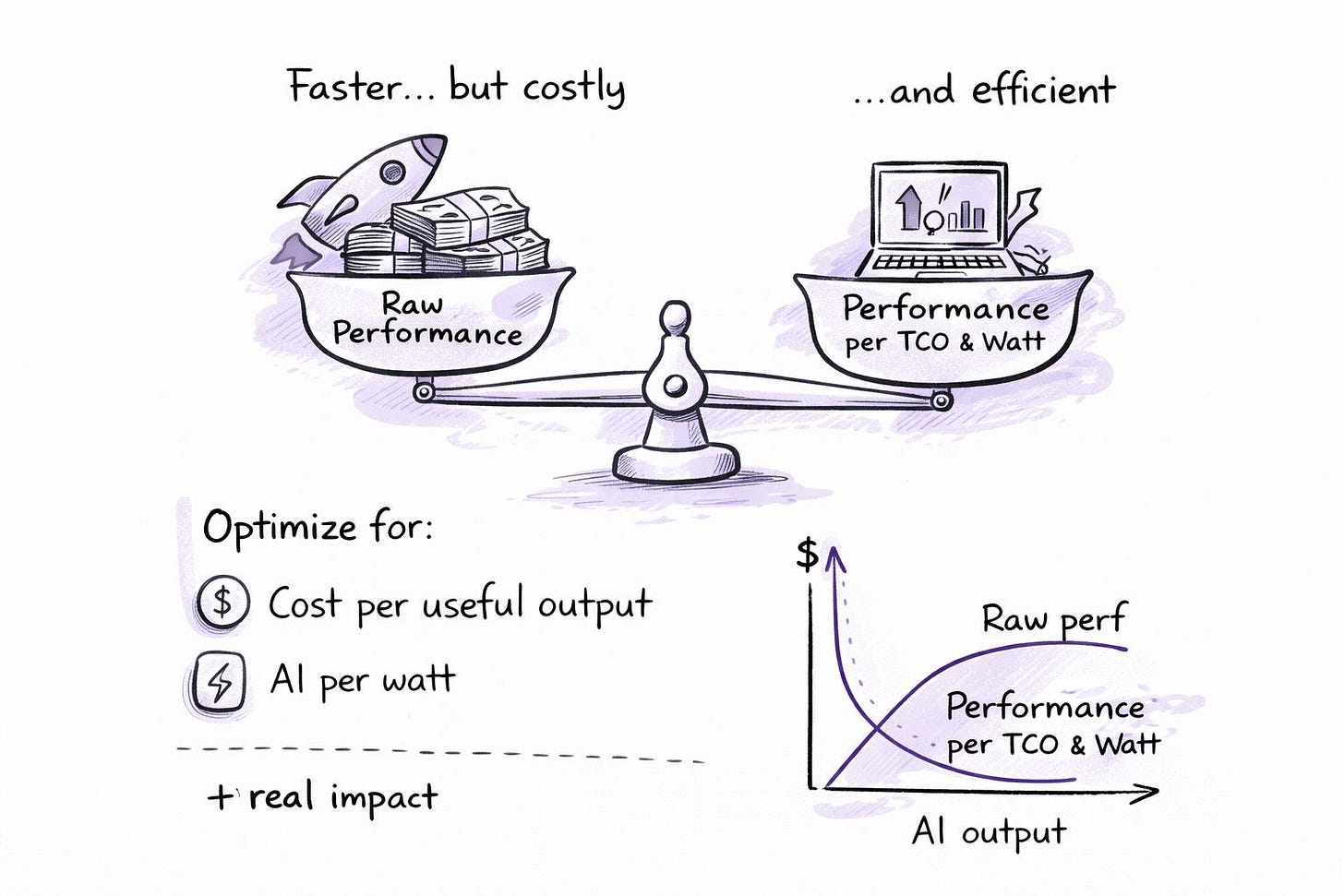

Tokens rely on compute performance efficiency (image generated by GAI tools)

This is where the conversation usually dies in the marketing department but thrives in the boardroom. Total Cost of Ownership (TCO) is the only metric that matters for global adoption.

If your AI solution is powerful but requires a liquid-cooled server rack to run a basic query, it isn’t “democratized.” It’s a luxury good. At MediaTek, we focus on a different set of KPIs: Tokens per TCO and Tokens per Watt.

Why? Because power is the ultimate constraint. (Ouch—just ask any data center manager trying to find another 50 megawatts of capacity).

Scaling AI to the next billion users requires us to optimize for the “invisible” costs. Every milliwatt saved at the chip level translates to millions of dollars saved at the enterprise level. We aren’t just building faster processors; we are building more profitable ones. Performance per Watt is the true North Star for anyone who wants their AI strategy to survive a budget review.
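
To make those two KPIs concrete, here is a minimal back-of-the-envelope sketch. It is not MediaTek's internal methodology; the function names, the parameters, and the simplified TCO (hardware price plus electricity only, ignoring cooling, networking, and staffing) are all assumptions made for illustration.

```python
def tokens_per_watt(tokens_per_second: float, avg_power_watts: float) -> float:
    """Tokens per second delivered for each watt drawn (equivalently, tokens per joule)."""
    if avg_power_watts <= 0:
        raise ValueError("avg_power_watts must be positive")
    return tokens_per_second / avg_power_watts


def tokens_per_tco_dollar(
    tokens_per_second: float,
    avg_power_watts: float,
    hardware_cost_usd: float,
    electricity_usd_per_kwh: float,
    lifetime_hours: float,
    utilization: float,
) -> float:
    """Lifetime tokens divided by a simplified TCO.

    Assumption: TCO = hardware price + electricity used while active.
    Real TCO models include many more line items.
    """
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in (0, 1]")
    active_hours = lifetime_hours * utilization
    total_tokens = tokens_per_second * active_hours * 3600
    energy_kwh = avg_power_watts / 1000 * active_hours
    tco_usd = hardware_cost_usd + energy_kwh * electricity_usd_per_kwh
    return total_tokens / tco_usd


if __name__ == "__main__":
    # Hypothetical inputs, chosen only to show how the functions are called.
    print(tokens_per_watt(tokens_per_second=20.0, avg_power_watts=5.0))
```

Run the same inputs for two candidate platforms and the comparison the boardroom cares about falls out directly: which one delivers more tokens per watt, and which one delivers more tokens per dollar over its lifetime.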

Case Study: Chromebooks and AI at the Edge

You can already see this play out in concrete products.

In AI Chromebooks, MediaTek has taken a leadership position. We recently launched an SoC that powers the Lenovo Chromebook Plus/Ultra, a high-performance AI-capable device.

Here’s what matters in that example:

- The device hits competitive or better performance for AI workloads.

- It retails around $700, not $2,000+.

- It’s built on an architecture optimized for efficiency, not just peak numbers.

Multiply that kind of design across millions of units, and you’re not just selling laptops; you’re changing who can reasonably access AI tooling in education, small business, and emerging markets.

Mobile as a Democratization Engine

The same story is true in mobile, where we’ve spent decades.

Our flagship smartphone platforms compete toe-to-toe with any player in the Android ecosystem. Yet OEMs can ship devices under $1,000. In the mid-range, AI-capable smartphones are landing in the $400–$800 band.

We’ve been a critical part of the global gadget adoption and smartphone revolution, enabling billions of users to access information and intelligence in their palms.

This is democratization in action:

- Billions of users are getting connectivity, compute, and now AI in their pockets.

- OEMs can profitably serve those segments.

- A global ecosystem of apps and services is building on that installed base.

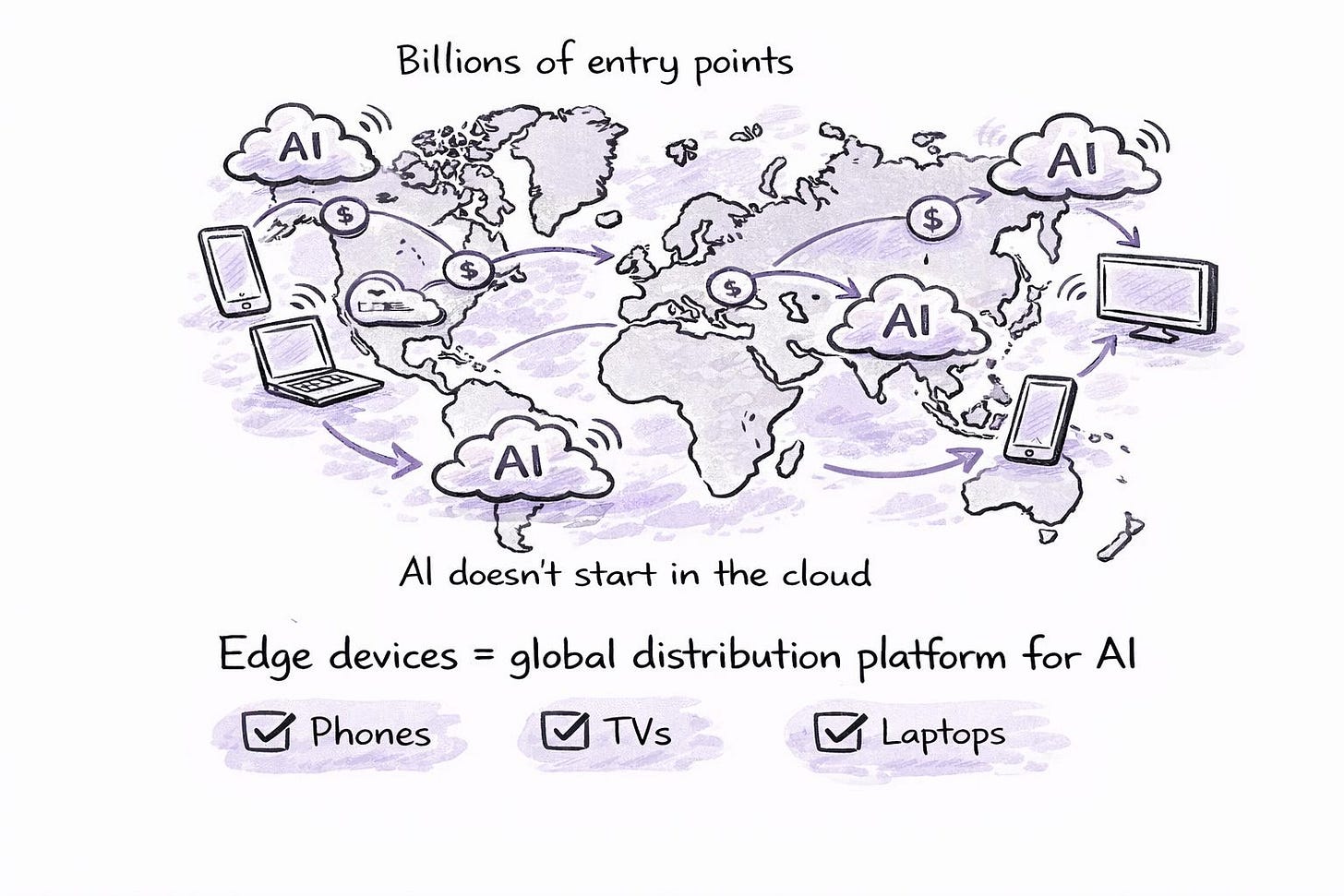

If your AI strategy ignores mobile and other edge devices, you’re missing the most powerful distribution channel for affordable intelligence the world has ever seen.

The Era of the AI Data Center and Token Economy

The Era of Hybrid AI Tokens. Image generated by GAI Tools

The cloud isn’t going away, but its role is shifting. We are moving toward a hybrid reality where the “Heavy Lifting” (training massive models) stays in the data center, while the “Everyday Inference” moves to the edge.

This hybrid model is the only way the token economy survives. If every “smart” toaster and “AI” doorbell has to talk to the cloud for every basic task, the global energy grid will buckle.

The data centers of the future will be the “Reserve Banks” of intelligence—storing and refining the core models. But the local devices will be the “Retail Branches” where the actual transactions happen. This distribution of compute is the only way to keep the cost-per-token low enough to fuel widespread corporate growth.
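
A rough way to see why the split matters is to treat the cost per token as a weighted average of edge and cloud costs. The sketch below assumes a simple linear blend; the function name and its parameters are hypothetical, and real deployments would also account for latency, model quality, and network costs.

```python
def blended_cost_per_million_tokens(
    edge_share: float,
    edge_cost_usd: float,
    cloud_cost_usd: float,
) -> float:
    """Weighted-average cost per million tokens for a hybrid deployment.

    edge_share is the fraction of tokens served on-device (0.0 to 1.0);
    the two cost arguments are per-million-token costs at each tier.
    """
    if not 0.0 <= edge_share <= 1.0:
        raise ValueError("edge_share must be between 0 and 1")
    return edge_share * edge_cost_usd + (1.0 - edge_share) * cloud_cost_usd
```

If on-device inference is cheaper per token than the cloud, every point of traffic shifted to the edge pulls the blended cost down proportionally.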

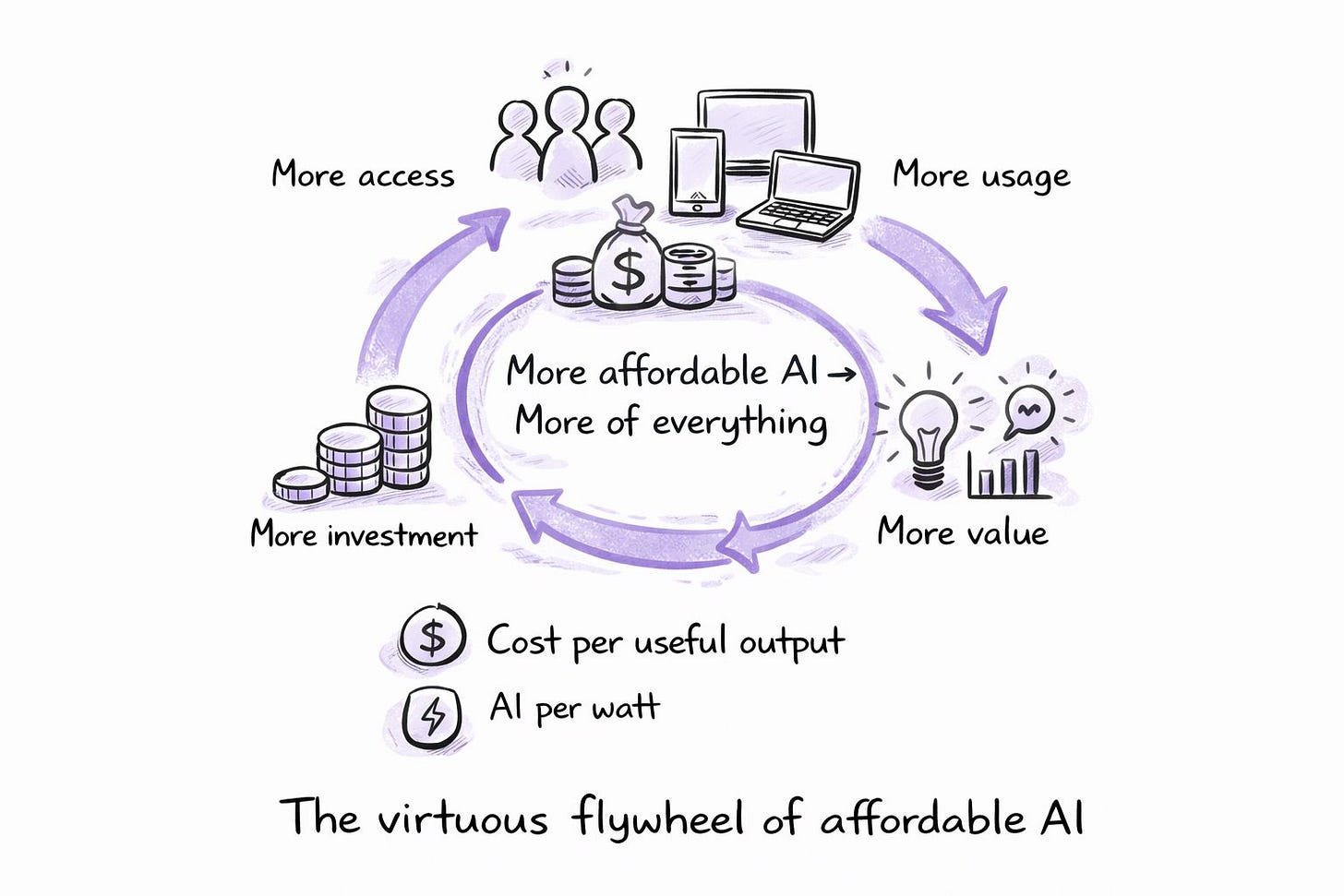

The Virtuous Flywheel of Affordable AI

The virtuous flywheel of affordable AI. Image generated by GAI tools

When tokens become cheaper and more abundant, you get a virtuous flywheel:

- More people and organizations can afford to use AI.

- They apply it to productivity, creativity, and problem-solving.

- The resulting gains generate economic benefit, which fuels further adoption.

- That adoption justifies more investment in infrastructure and optimization, driving costs down again.

The more people have access to AI, the more they will use it and enhance their productivity. The more enterprises use AI to solve chronic problems, such as those in healthcare, the more lives we enrich, and the more people benefit and adopt AI tools. That’s the virtuous flywheel.

For executives, the key question is: Where do you plug into this flywheel?

- Are you building infrastructure that lowers the cost per token?

- Are you designing applications that assume cheap, abundant tokens?

- Or are you locked into models that only work when tokens are scarce and expensive?

Democratization Requires the Whole Stack

Of course, MediaTek is only one part of a larger ecosystem.

The large frontier model companies have to do their part. PC OEMs have to do their part. Smartphone OEMs, data center solution providers… It’s a much bigger ecosystem, but we see our role very clearly.

Democratizing AI at scale requires:

- Model providers optimizing architectures and training methods for efficiency.

- Cloud and data center players driving innovation in cooling, networking, and power efficiency.

- Device manufacturers integrating AI capabilities in ways that make sense for real users, not just spec sheets.

- Connectivity and chipset providers like MediaTek driving down the cost and energy per unit of useful AI work.

The companies that understand their position in this value chain of democratization—and optimize relentlessly for it—will define the next decade.

Measuring Success Beyond Benchmarks

Benchmarks will always matter. But if we want to measure whether we are truly democratizing AI, we need to look at a different set of metrics:

- Cost per meaningful AI interaction (per user, per business, per month).

- Energy per unit of AI work at different layers (device, edge, cloud).

- The number of users and organizations that can realistically use AI daily, not just test it.

- Geographic and socioeconomic distribution of AI access.

The true metric will eventually come down to how much performance we can deliver at the lowest cost of ownership and the lowest energy utilization.

If we get that right, tokens won’t just be the currency of AI. They’ll be the currency of a more equitable, productive, and intelligent future. We have to stop measuring success by how “smart” the AI is in a lab. We must measure it by how affordable it is in the wild.

Are you measuring the raw intelligence of your AI tools, or are you looking at the TCO of your innovation? What’s the metric that really drives your growth today? Let’s talk in the comments.

About the author

With over three decades of experience in marketing, communications, and business development, Rahul Sandil is a global marketing leader passionate about building brands, empowering teams, and delivering impactful results across industries. As Vice President and General Manager of Global Marketing and Communications at MediaTek, he serves as the company’s chief marketing and communications executive, overseeing global brand strategy, marketing innovation, strategic partnerships, and corporate communications that connect leading-edge technology with audiences worldwide.

Previously, Rahul held leadership roles at Micron Technology, where he was responsible for corporate marketing, as well as at other global organizations in the technology, digital media, and entertainment sectors, including Microsoft, Amazon, HTC, and others. As a board advisor, mentor, and consultant, he has leveraged his expertise in AI, emerging technologies, and advanced digital marketing to help companies achieve sustainable growth.

Rahul is passionate about creating customer-centric experiences that connect communities with technology. He believes in the power of storytelling, creativity, and data to drive business outcomes and social impact. He is also an avid geek and usually the first to adopt new consumer tech products. To read more about Rahul’s thoughts on AI, Marketing, and Leadership, check out his blog, connect with him on LinkedIn, subscribe to his Substack newsletter, or follow him on Medium.

Disclosure: The author leverages Generative AI platforms to support content research, copywriting, and editing for his blogs. All ideation, outlines, and strategic insights are the author’s own.

FAQ: Strategy, Tokens, and TCO

What is the “Token Economy” in Artificial Intelligence?

The token economy refers to the shift where intelligence is treated as a measurable commodity. Every AI-generated output has a specific unit cost measured in tokens. For businesses, tokens are the new currency of productivity. If the cost of generating a token exceeds the value it creates, the AI strategy is economically unviable.

Why does Total Cost of Ownership matter more than raw performance?

Raw performance is a vanity metric. Total Cost of Ownership (TCO) is the boardroom reality. TCO includes the hidden expenses of AI: energy consumption, cooling, latency, and cloud subscription “taxes.” High-performance AI that is too expensive to operate at scale is a liability, not an asset. (Ouch—but true).

Why is Performance per Watt the key metric for scaling AI?

Power is the ultimate constraint of the digital age. Performance per Watt measures how much intelligence a chip can deliver for every unit of electricity consumed. In a world of limited data center capacity and mobile battery life, efficiency is the only way to scale AI to billions of users without crashing the grid or the P&L.

What is the difference between Edge AI and Cloud AI economics?

Cloud AI requires a recurring “tax” to ping a remote server for every query. Edge AI processes intelligence locally on the device (like a smartphone or Chromebook). By moving inference to the edge, companies eliminate cloud latency and recurring compute costs. This shift turns AI from a variable expense into a built-in utility.
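
One way to pressure-test that claim is a break-even calculation: how many queries does it take for the extra cost of an AI-capable device to pay for itself in avoided cloud fees? The sketch below is illustrative only. The function name and parameters are hypothetical, and it assumes a fixed per-query cloud cost and an optional per-query energy cost on the device.

```python
import math
from typing import Optional


def break_even_queries(
    device_premium_usd: float,
    cloud_cost_per_query_usd: float,
    edge_energy_cost_per_query_usd: float = 0.0,
) -> Optional[int]:
    """Queries needed before on-device inference recoups the hardware premium.

    Returns None when running on the edge saves nothing per query,
    in which case the premium is never recovered.
    """
    saving_per_query = cloud_cost_per_query_usd - edge_energy_cost_per_query_usd
    if saving_per_query <= 0:
        return None
    return math.ceil(device_premium_usd / saving_per_query)
```

Below the break-even point, the cloud is cheaper for that user. Above it, every additional query is close to free, which is what turns AI from a variable expense into a built-in utility.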

How does AI democratize technology in emerging markets?

Democratization happens when the “cost per useful output” drops low enough for mid-range devices. When AI-capable processors are integrated into affordable smartphones and Chromebooks, high-level computing becomes accessible to users in India, Africa, and beyond. Affordability is the only true engine of global digital inclusion.

Why should a CMO care about AI unit economics?

The modern CMO is the Chief Customer Advocate. If marketing deploys AI tools that are too costly or inefficient for the user to sustain, they are destroying shareholder value. To drive corporate growth, the CMO must ensure the “magic” of the brand is supported by the “math” of the infrastructure.

What is the “Virtuous Flywheel” of affordable AI?

The flywheel begins with optimized, low-cost architecture. Lower costs drive increased access. Increased access leads to higher usage and more data. More data creates higher value, which fuels further investment into even more efficient technology. This cycle is the only way to move AI from a niche luxury to a global standard.
